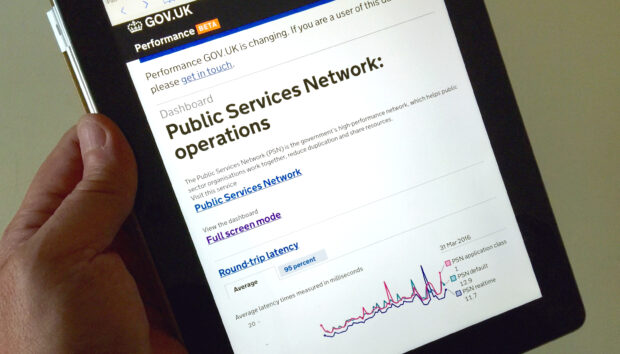

At GDS we’ve started publishing the data we collect from monitoring the performance of the Public Services Network (PSN). It’s another step in our efforts to make things simpler and clearer for PSN customers across the country.

The ability to set network performance targets, as well as monitor and maintain them, is a key characteristic of the PSN. And there’s good news - the data shows that the PSN is performing better than we originally planned and well within the design targets we set our network providers.

Why it's useful

When you buy any network service, you’ll sometimes pay for a specific performance level that your service provider will commit to (usually within the agreement you’ll both sign up to).

If what your provider actually delivers is outside of these levels you’ll want to know why, understand where the issues are and, ultimately, get some compensation because you haven’t got what you paid for.

Sometimes, the performance levels agreed by a service provider can be quite conservative and a typical user experience is often much better than the targets stated in the agreement.

With this performance data, we can show you the network performance that your users actually experience on the PSN. It means you can see what your users would typically get as a baseline, and that gives you a better understanding of whether you need to consider additional levels of service.

What we're doing with the data

We’ve only just started collecting this performance data, and it will take a little while to see what “normal” looks like, but there are already some interesting patterns starting to show up.

For example, there’s a suggestion of a weekly pattern in the data, and we can also see that there was some network reconfiguration on a couple of days that caused a few packets to get delayed or dropped.

Knowing what “normal” looks like helps us spot things that are unusual, and enables us to do something about them quickly. Of course, it’s not a substitute for what the network providers are doing in real time to detect and rectify incidents, but it does give us a way to dig into things with service providers and retrospectively investigate anything significant that looks out of the ordinary.
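As a rough illustration of how a baseline helps spot unusual days, here is a minimal sketch in Python. It is not how the PSN team analyses its data; the function name `unusual_days`, the threshold of 3 and the sample figures are all assumptions made for the example. It flags any day whose value sits far from the median, using the median absolute deviation as the measure of spread.

```python
from statistics import median


def unusual_days(daily_values, threshold=3.0):
    """Return the indexes of days whose value sits far from the median.

    Hypothetical helper: the threshold of 3 is an arbitrary starting point.
    """
    centre = median(daily_values)
    # Median absolute deviation: a measure of spread that a single
    # bad day barely moves, unlike the standard deviation.
    mad = median(abs(v - centre) for v in daily_values)
    if mad == 0:
        # Every typical day is identical, so anything different is unusual.
        return [i for i, v in enumerate(daily_values) if v != centre]
    return [
        i for i, v in enumerate(daily_values)
        if abs(v - centre) / mad > threshold
    ]


# Made-up daily average latency figures in milliseconds, for illustration only.
latency_ms = [12.1, 11.8, 12.4, 12.0, 19.5, 12.2, 11.9]
print(unusual_days(latency_ms))  # flags index 4, the 19.5 ms day
```

A median-based baseline is used here because a day of network reconfiguration should stand out against the baseline rather than shift it.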

Understanding the numbers

Network traffic is prioritised into different classes of service according to how quickly it needs to get to its recipient. There can be many different classes of service, but we’ve agreed a standard set across PSN, and selected three to report:

- PSN real time - the highest priority used for voice calls

- PSN application class 1 - used for media streaming

- PSN default - for traffic that can wait a few milliseconds

The targets we set for each class are slightly different so that we get the best out of the network for all users.

Using the graphs

The PSN network performance dashboard is divided into a number of graphs, which show:

Round trip latency: the time it takes for data to travel across the network and back. We often experience high latency when talking to someone on the other side of the world, but it can also be caused by traffic not taking the most direct path.

We measure the time taken for a signal to cross at least four different PSN providers’ networks, end-to-end, and then return. Our end-to-end target for this is around 60 milliseconds, and you can see that the average figure we actually get is 11-15 milliseconds.
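To show how a set of round trip measurements compares with the target, here is a small Python sketch. The 60 millisecond target comes from the text above; the function name `summarise_rtt` and the sample values are assumptions made for illustration, not real PSN measurements.

```python
from statistics import mean

LATENCY_TARGET_MS = 60.0  # end-to-end round trip target quoted above


def summarise_rtt(samples_ms, target_ms=LATENCY_TARGET_MS):
    """Summarise round trip times (in milliseconds) against a target."""
    average = mean(samples_ms)
    return {
        "average_ms": average,
        "worst_ms": max(samples_ms),
        # Headroom is how far the average sits below the target.
        "headroom_ms": target_ms - average,
        "within_target": average <= target_ms,
    }


# Made-up samples in the 11-15 ms range described above.
print(summarise_rtt([11.2, 13.5, 14.8, 12.0, 11.5]))
```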

Jitter: Networks deliver lots of different types of data. Some data, like a file download, just needs to get there eventually. Other applications, like media streaming, need the data to be delivered in a timely fashion, otherwise you get problems such as glitches when you are streaming a video. These glitches are caused by variation in how long packets take to arrive, known in the industry as “jitter”. It’s very important to keep jitter down, especially for voice and media streaming. Speech quickly becomes difficult to understand if jitter is too high.

Here, we measure the jitter in each direction across at least four different PSN providers’ networks. Our end-to-end target for this is 7-9 milliseconds for PSN real time and PSN application class 1 and, as you can see from the data, the average figure we actually get is less than a quarter of a millisecond.
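There are several ways to calculate jitter, and the dashboard does not say which one the measurement units use. As a sketch only, the Python below assumes one simple definition: the average absolute difference between the one-way delays of consecutive packets. The function name `mean_jitter_ms` and the sample delays are made up for the example.

```python
def mean_jitter_ms(delays_ms):
    """Average absolute change in one-way delay between consecutive packets.

    Assumed definition for illustration; real measurement equipment
    may calculate jitter differently.
    """
    if len(delays_ms) < 2:
        raise ValueError("need at least two delay samples")
    # Pair each delay with the one that follows it.
    differences = [abs(b - a) for a, b in zip(delays_ms, delays_ms[1:])]
    return sum(differences) / len(differences)


# Made-up one-way delays in milliseconds for four consecutive packets.
delays = [6.0, 6.25, 6.0, 6.25]
print(mean_jitter_ms(delays))  # 0.25
```

Because the calculation only looks at differences between delays, a link can have high latency and still have low jitter, as long as the delay is steady.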

Packets lost: Occasionally, some data packets travelling across a network are lost or dropped. This can be caused by network congestion or faulty equipment and cabling. You don’t usually notice packet loss because your software often compensates by requesting the missing information. Nevertheless, packet loss is a good indicator of the health of the network. Our end-to-end target for this is 0.4% for PSN real time and PSN application class 1, and you can see that the figure we usually get is below 0.3%.
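Packet loss is usually expressed as the share of packets sent that never arrive. The Python sketch below shows that arithmetic and a check against the 0.4% target quoted above. The function name `packet_loss_percent` and the packet counts are assumptions made for illustration.

```python
LOSS_TARGET_PERCENT = 0.4  # target quoted above for real time and application class 1


def packet_loss_percent(sent, received):
    """Percentage of sent packets that did not arrive."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    if not 0 <= received <= sent:
        raise ValueError("received must be between 0 and sent")
    return 100.0 * (sent - received) / sent


# Made-up counts: 25 packets lost out of 10,000 sent.
loss = packet_loss_percent(10_000, 9_975)
print(loss)                         # 0.25
print(loss <= LOSS_TARGET_PERCENT)  # True
```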

You can get more details, including the different targets set for each service class, on the PSN Quality of Service page on GOV.UK.

What next?

We’ll be rolling out additional measurement units over the next few months. These will enrich the overall picture of performance and the useful data we can feed back to PSN customers. We’re also getting each PSN network service provider to send us their own reports, so we can align our end-to-end reports with their data, and that will help us work with them to address any issues that may arise.

It’s going to be a developing area for us, and we’ll keep you up to date as things evolve and more information becomes available to us.

If you’d like more information about our performance monitoring data, or about how we collect the data or how you can use it, then please contact us at the PSN team contact centre.